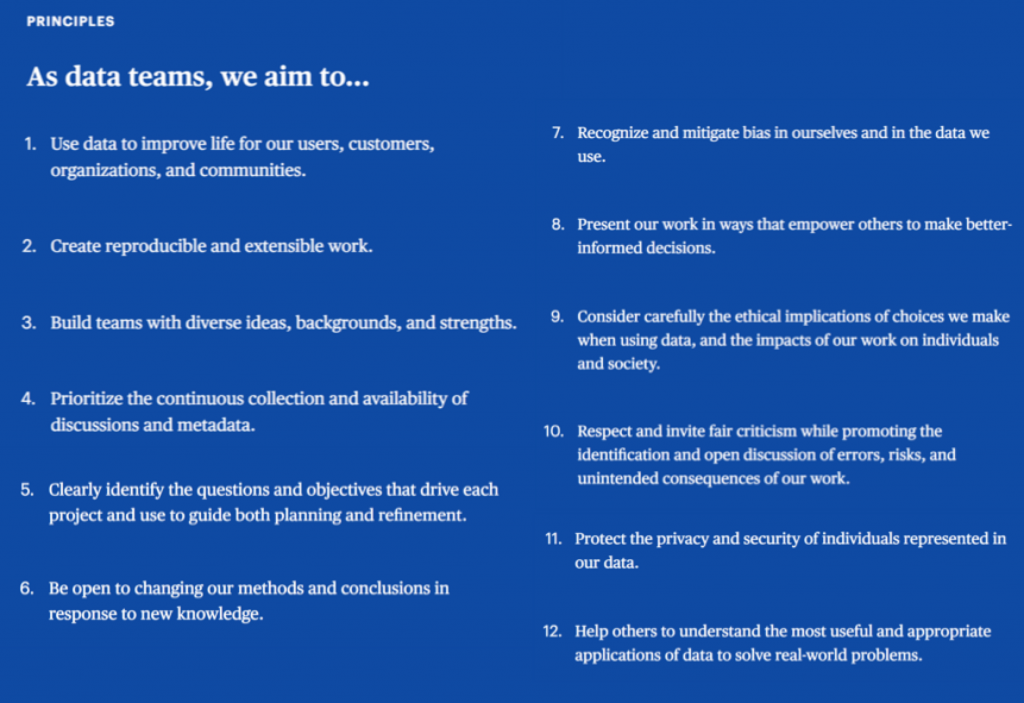

Yesterday evening I started teaching a new data visualization class at the University of Washington’s Continuum College. I’ve been teaching this class for a few years now, but this time I decided to add a Data Ethics segment to the first three-hour session, along with the obligatory course overview, grading criteria, distribution of software licenses, and so on. I based the segment on the Manifesto for Data Practices, which I was fortunate enough to co-author with a few dozen other professionals at the Open Data Science Leadership Summit, led by the good folks at data.world last year (videos of the summit are available here).

Data Ethics is a topic many people are talking about these days, following a long parade of news headlines over the past few years about user privacy debacles, major data breaches, and evidence of bias in machine learning algorithms. The data world is in dire need of an ethics overhaul, and we all know it.

But what, exactly, is needed? Surely not another code, set of guidelines, or manifesto. Like our group last year, others have published their own (ACM, ASA, IEEE, the UK, Google). Not more hand-wringing or virtue-signaling, which even the most egregious offenders can participate in and even hide behind. So what, then?

Today I took an hour to read through “Ethics and Data Science,” a free ebook written by Hilary Mason, DJ Patil, and Mike Loukides and recently published by O’Reilly Media. I applaud these authors and O’Reilly for putting this book out there for free. We need more of that.

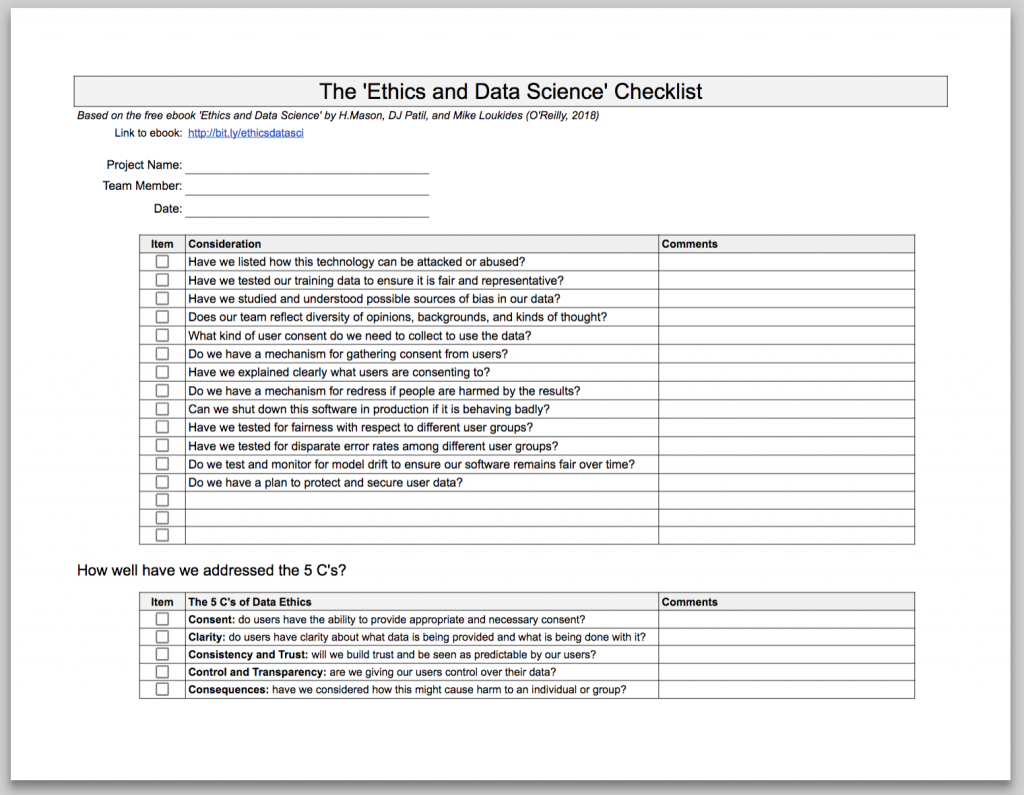

What I really like about the book is its focus on the practical side of implementing sound ethical principles. The authors point out that the ethical guidelines for using data already exist, and some of them have been around for decades. Oftentimes the offending software or algorithm was created by well-intentioned developers who are working on insane timelines, who have no mechanism to stop the train from leaving the station when they notice a problem, and who work in corporate cultures that don’t put a premium on ethical requirements.

One proposed remedy? A checklist and the 5 C’s (Consent, Clarity, Consistency, Control, and Consequences). The authors describe how checklists have helped in other fields (medicine, manufacturing) and argue that adding such a tangible process step can increase the chances that our data work doesn’t do harm. I took the liberty of putting their checklist items into a Google Sheets file that you can save to your own Google Drive, then modify and use as you see fit.

Another proposed remedy? Talk about it. All the time and everywhere. Even at the dinner table.

It’s a critical topic that we need to get right in the next few years. The algorithms and technology that surround us are about to become much more sophisticated and to gain even more control over our world in the next decade. As the authors of the book point out, we can either work to make sure that we end up with a technology utopia, or we can let it run away from us and end up with a technology dystopia on our hands.

Data ethics is the key to which way it goes.

Thanks for reading,

Ben